"....But there was another big issue, which is a government agency in the US called ITAR, which is the International Traffic in Arms Regulations, and they're the government agency responsible for making sure weapons aren't shipped around willy-nilly. And this shipment from China was definitely going to get flagged. So the workaround I came up with was to ship all the missiles disassembled. And I made sure that the sculptures were fully non-functioning missiles so that I wouldn't be breaking any international or US laws. And that worked."

Michael Lukyniuk has added a photo to the pool:

My previous posting, a watercolour sketch of a lily of the valley plant, was partly an experiment to create a simple design that I could carve onto a piece of linoleum and print. I was able to draw and cut the design reasonably quickly; after all, it's only 10 cm in diameter. This is the result. Since the carving was as interesting as the print, I've included both. My impression of linocut printing so far is that my results aren't as detailed as my ink drawings and watercolours, perhaps because of the size of the image and undoubtedly because of my skill level. It also takes some adjustment to conceive a black-and-white image from a carving. My plan is to continue trying, perhaps on larger sheets of linoleum. To be continued ...

Linoprint from linoleum mounted on a board, printed with Speedball ink, 10 cm (4 inches) in diameter.

Sean Strickland claims he was not cleared to attend the UFC event because he ‘made fun of Israel and Epstein’

The only current US UFC champion says he has been barred from Sunday’s fight card on the south lawn of the White House because he dared to criticize Donald Trump, Israel and Jeffrey Epstein.

On Tuesday night, middleweight champion Sean Strickland wrote on X that he had been informed by the Ultimate Fighting Championship that he had not been cleared by the White House to attend the event.

The lowest ever viewing figures, an identity crisis for the show and a confusing Billie Piper-based cliffhanger – whoever takes on the BBC fantasy drama has quite the job on their hands …

The announcement that the BBC has abandoned the planned Doctor Who Christmas special, and is ending its partnership with showrunner Russell T Davies and Bad Wolf production company, will not have come as much of a surprise to many fans. It has been rumoured for some time. Aside from the gossip, the fact that no filming appeared to have taken place for a programme that traditionally requires a lengthy post-production process had already suggested something was up.

The BBC has said the show remains an important part of its portfolio, stating it wants to ensure that “when the Tardis lands once more, it does so in all its glory”. While it isn’t inconceivable that Bad Wolf might bid to make the show under a new regime, Davies appears to have hung up his Tardis keys for good, posting on Instagram: “Now I’m as excited as anyone to see what comes next!”

Around ten Dutch data centres held an open day on Tuesday. One of the messages: small data centres are very different from the American hyperscalers. And what's more, they are crucial.

The teaser trailer for the sequel to David Fincher’s The Social Network is here — they’re calling the movie “a companion piece” to the first film. It’s based on The Facebook Files:

> Primarily, the reports revealed that, based on internally commissioned studies, the company was fully aware of negative impacts on teenage users of Instagram, and the contribution of Facebook activity to violence in developing countries. Other takeaways of the leak include the impact of the company's platforms on spreading false information, and Facebook's policy of promoting inflammatory posts. Furthermore, Facebook was fully aware that harmful content was being pushed through Facebook algorithms reaching young users. The types of content included posts promoting anorexia nervosa and self-harm photos.

Jeremy Strong nails Zuckerberg’s voice & mannerisms. The hint of Reznor/Ross at the end is great, though it looks like Alexandre Desplat is doing the music this time around. Aaron Sorkin, who wrote the screenplay for the first film, writes and directs. Out in theaters October 9th.

Tags: Facebook · movies · The Social Reckoning · trailers · video

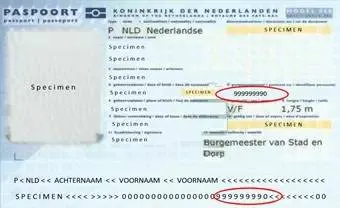

The Dutch Data Protection Authority (Autoriteit Persoonsgegevens, AP) takes the view that the Tax and Customs Administration (Belastingdienst) may no longer include citizen service numbers (BSNs) with payments, for example in payment files sent to banks or on statements that citizens and suppliers get to see. According to the regulator, this processing is unnecessary and violates the General Data Protection Regulation (GDPR, known in Dutch as the AVG). The tax authority must adapt its systems and from now on omit or mask BSNs, Het Financieele Dagblad reports.

The BSN is a unique personal number that government organisations use to identify someone unambiguously. It may be used only when that is necessary for a statutory task and has a specific legal basis, the Dutch central government (Rijksoverheid) confirms. Unnecessary dissemination, for example via bank statements or generic payment files, increases the risk of identity fraud.

This is not the first time the AP has called the tax authority to order. Previously, the regulator ruled that including BSNs in the VAT numbers of self-employed workers (zzp'ers) was against the law. When the Belastingdienst failed to act decisively enough, the Amsterdam District Court (Rechtbank Amsterdam) ordered that the BSN be removed from the VAT number before 1 January 2020, according to Taxlive.

The supervision is structural: since 2024 the AP has had a formal supervisory arrangement in place at the Belastingdienst, and the annual plan for 2026 explicitly names "BSN in payment reference/claim reference" (BSN in betaalkenmerk/vorderingskenmerk) as a project, according to a letter to the House of Representatives from the Ministry of Finance. The legal risks are considerable: for serious GDPR violations, fines can reach €20 million or 4 percent of worldwide annual turnover, whichever is higher.

For citizens, the ruling means their BSN will less often "travel along" unasked with payments and bank statements. For the tax authority, it means yet another overhaul of IT systems that are already creaking under a backlog of privacy maintenance.

Meanwhile, the question is a bitter one: how many times must a regulator say the same thing before the government takes its own privacy rules seriously?

Let's all have a laugh at those dimwitted compatriots who are stupid enough to ride a fatbike, stupid enough to do it in Amsterdam's Vondelpark, then also stupid enough to get caught by boas (municipal enforcement officers) and, on top of that, stupid enough to actually let the boas fine them. But not a bad word about the fatbike boas, because today they are showing us the wonderful side of enforcement (it can be done elsewhere too). Fatbikes have been verboten in the Vondelpark for about a month now, and after an initial period of feeling things out and issuing warnings, it turns out that 39 fatbike dimwits received fines of up to 115 euros between 25 May and 8 June. Justice for non-fatbikers. At last the Vondelpark is ours again.